You pay for compute hours; what you actually want is data moved. This post measures the exchange rate across the four bottlenecks that dominate real pipelines: SQL copy, REST APIs, JSON files, and Parquet.

Aman Gupta

The agent wrote the pipeline, but assumptions slip through silently: nulls in primary keys, duplicates from the wrong write disposition, drifted enum values. The dltHub AI Workbench data quality toolkit bootstraps checks from dlt's existing schema, samples columns before any rule ships, writes the checks into your pipeline as decorators, and routes failures back to the toolkit that owns the surface area: ingestion, transformations, or exploration.

Hiba Jamal

In public preview today as part of dltHub Pro. dltHub Transformations turns raw data into the clean tables your business and your agents actually use. It is built for a moment when agents write 9 out of 10 data pipelines.

Matthaus Krzykowski

Today's stacks split ingestion, transformation, and orchestration apart, and the context that agents need gets lost at every boundary. dltHub Transformations runs ingestion, transformation, lineage, and verification inside the same execution context, so an LLM can reason about your business with the context a senior analyst would have.

Adrian Brudaru

One working student, Claude Code, one stakeholder call, and two weeks. The migration worked, but the workflow we used is the actual point of this post. AI alone wouldn’t have gotten us there.

Nikolas Jack Altran

Generally available today. 91% of new dlt pipelines are now built by agents. dltHub Pro makes building and running them production-grade for any Python developer.

Matthaus Krzykowski

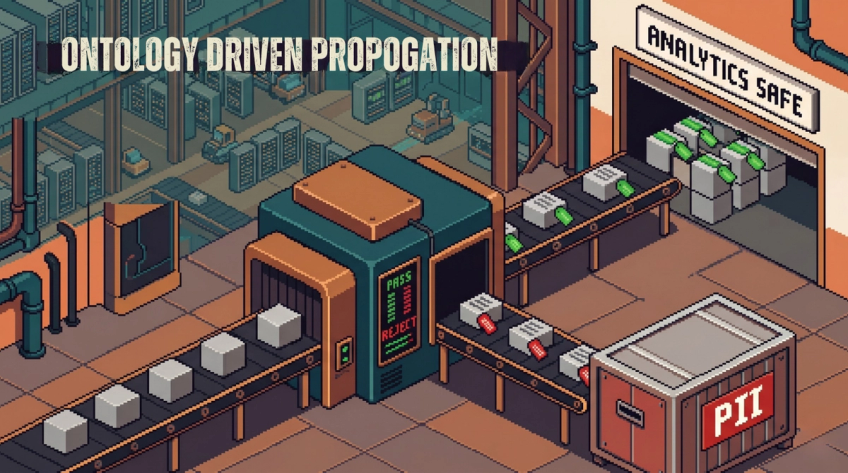

Write your access policy as a plain-English ontology. The schema evolves; the LLM reads the rules and decides.

Aman Gupta

With 91% of dlt pipelines AI-written, learn Agentic Data Engineering in this free 1-hour course.

Adrian Brudaru

AI agents can write data pipelines. The part that isn't ready is everything around them: isolation, rollbacks, and safe promotion to prod. This demo shows what a stack built for agents actually looks like.

Elvis Kahoro

Agents don't hallucinate. They navigate without a map. Ontology engineering is how you build one, and why every team pulling humans out of the loop needs it now.

Adrian Brudaru

The dltHub AI Workbench gives Claude Code a structured workflow for building data pipelines. We put it to the test with a real geopolitical question.

Roshni Melwani