
Ontology driven Dimensional Modeling

Trying to force an LLM to reconstruct the 'world' using only a semantic layer is like trying to turn cheese back into milk. The information required to understand the original system was stripped away during the modeling process.

Adrian Brudaru,

Co-Founder & CDO

Why am I exploring this topic?

The modern data stack is suffering from theoretical blindness. By over-focusing on dimensional models and semantic layers, we have trapped ourselves in a "local maximum". We have become experts at optimizing data structures while losing the ability to understand the real-world systems those structures are meant to represent.

Data modeling was always intended to be a bridge, starting from a business ontology (the world) and moving toward a data representation (the model). But in the multi-decade journey from the field's inception to today, a new generation of practitioners has forgotten the "Why." We are stuck in a cycle of "doing because we’ve always done it," unaware that the loop is closing without us.

Turning cheese back into milk: A human endeavor

The fundamental problem is one of lossy compression. A data model is a compressed, simplified snapshot of a complex world system. Trying to force an LLM to reconstruct the "world" using only a semantic layer or a dimensional model is like trying to turn cheese back into milk. That ship has sailed; the information required to understand the original system was stripped away during the modeling process.

When we ask an LLM to work with data models while missing a coherent world model (an Ontology), the system has limited capabilities. The LLM can read the data, but to use it meaningfully it is forced to "hallucinate" the missing context. To solve modeling for the AI era, we cannot simply build better tables; we must go back to the original theory and rebuild the bridge between Ontology and Model.

The Map (ontology) vs. The Territory (data model)

We are moving toward Ontology-Driven Modeling, where the "blueprint of truth" exists independently of the messy databases it describes.

I recently experimented with LLM-native modeling, and it shattered my own assumptions. I thought building EL (Extract/Load) pipelines would be easy, but transformations would be hard because they contain "human semantics" that aren't captured in the data.

I was wrong. I found that if you provide the LLM with a clear ontology (the "semantic magic" that senior modelers usually keep in their heads), transformations become a solved problem. In my tests, a simple 20-question prompt created the ontology needed to reliably transition raw data into a canonical model. Without that ontology? Groundhog Day. The LLM would guess the semantics differently every single time, and it was predictably wrong.

What’s an Ontology?

In the context of data modeling, think of an ontology as the "worldview" you are giving the LLM.

While a schema tells a database how to store data (strings, integers, primary keys), an ontology defines what that data represents in reality and how those things relate to one another. It is the connective tissue between raw data and human logic.

If you tell an LLM, "Here is a pile of JSON," it sees text. If you provide an ontology, you are giving it a map. You are defining:

- Entities: The "nouns" of your business (e.g., Campaigns, Creatives, Conversions).

- Relationships: The "verbs" that connect them (e.g., a Campaign contains Ad Groups).

- Attributes: The "adjectives" describing them (e.g., Spend, Impressions, Location).
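To make the three building blocks concrete, here is a minimal sketch of an ontology as plain Python data. The class names (`Entity`, `Relationship`, `Ontology`) and the assignment of attributes to entities are my own assumptions for illustration, not a standard format; the point is only that the "nouns, verbs, and adjectives" can be written down explicitly and rendered as text for an LLM.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Entity:
    """A 'noun' of the business, with the attributes that describe it."""
    name: str
    attributes: tuple[str, ...] = ()


@dataclass(frozen=True)
class Relationship:
    """A 'verb' linking a subject entity to an object entity."""
    subject: str
    verb: str
    obj: str


@dataclass
class Ontology:
    entities: dict[str, Entity] = field(default_factory=dict)
    relationships: list[Relationship] = field(default_factory=list)

    def add_entity(self, name: str, *attributes: str) -> None:
        self.entities[name] = Entity(name, tuple(attributes))

    def relate(self, subject: str, verb: str, obj: str) -> None:
        # Refuse relationships that mention entities the ontology does not define.
        for name in (subject, obj):
            if name not in self.entities:
                raise KeyError(f"unknown entity: {name}")
        self.relationships.append(Relationship(subject, verb, obj))

    def describe(self) -> str:
        """Render the ontology as plain sentences suitable for an LLM prompt."""
        lines = [
            f"{e.name} has attributes: {', '.join(e.attributes) or 'none'}."
            for e in self.entities.values()
        ]
        lines += [f"{r.subject} {r.verb} {r.obj}." for r in self.relationships]
        return "\n".join(lines)


# Illustrative only: which attribute belongs to which entity is an assumption.
ads = Ontology()
ads.add_entity("Campaign", "Spend", "Location")
ads.add_entity("Ad Group", "Impressions")
ads.relate("Campaign", "contains", "Ad Group")
print(ads.describe())
```

The `relate` check is the interesting design choice: the ontology refuses to talk about things it has not defined, which is exactly the discipline a pile of JSON lacks.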

To tie this back to my recent blog post about AI memory, think of an ontology as the "blueprint of truth" for your business. A canonical model is a map of the data, an artifact of applying the ontology to raw data so we can better understand it.

When we move to LLM-native modeling or unstructured data, that same ontology is the schema for a Knowledge Graph. Instead of just entities and relationships, we define the world through nodes and edges.

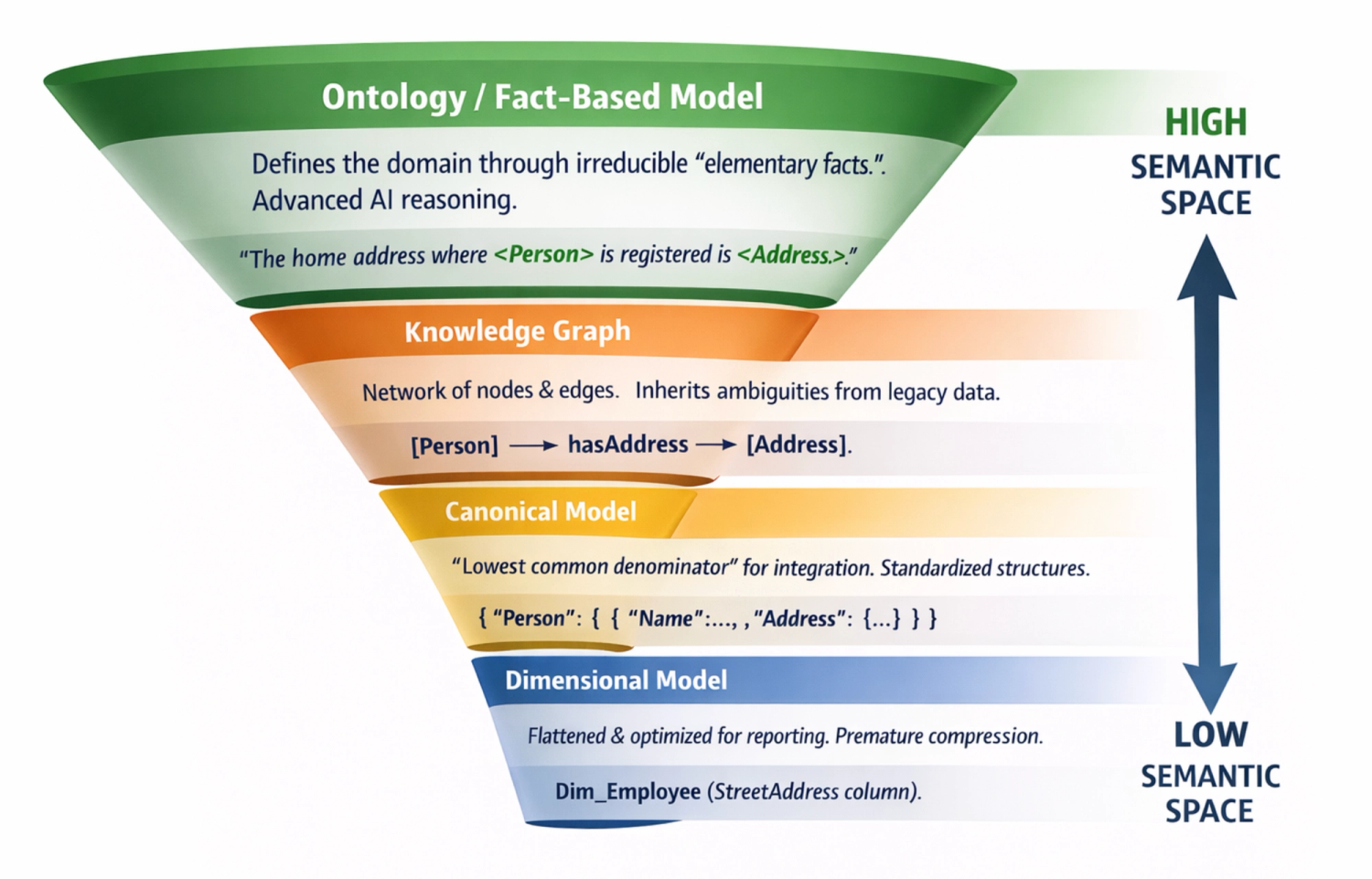

The relationship between ontology, knowledge graph, canonical models and dimensional models

Across various parts of the data field, we refer to similar concepts with different words. And as you can see, these concepts represent the same data, more or less.

The big distinction is that the ontology belongs to the world, while the knowledge graph, canonical model, dimensional model, and semantic layer belong to the data.

This creates a semantic gap - the questions we have are about the real world, but the data uses a different model.

Semantic layers only answer “canned” or “generic” questions

Semantic layers are actually a part of the canonical or dimensional model - the part about how you use it. A dimensional (or canonical) model is built to answer only core business questions and ignores the finer details of the complete reality. It only answers aggregate questions over defined metrics. A semantic layer tells the AI how to calculate ROAS (return on ad spend). An ontology tells the AI what factors cause ROAS to move.

This is a fundamentally limited capability: the agent cannot actually answer real-world questions using this model alone, so it guesses (hallucinates) the semantics. In other words, it can only reason over the data with its own ontology, which may diverge widely from business facts and lead to misleading conclusions.
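Here is a rough sketch of that difference in code. The `roas` function is the standard revenue-over-spend definition; everything in `ROAS_DRIVERS` is a made-up illustration of what an ontology might record, not a real causal model of any business.

```python
def roas(revenue: float, spend: float) -> float:
    """Semantic-layer knowledge: how the metric is calculated."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return revenue / spend


# Ontology knowledge: what can move the metric.
# These driver names are illustrative assumptions, not a real causal model.
ROAS_DRIVERS = {
    "ROAS": ["Revenue", "Spend"],
    "Revenue": ["Conversions", "Average Order Value"],
    "Conversions": ["Clicks", "Conversion Rate"],
    "Spend": ["Clicks", "Cost Per Click"],
}


def upstream_factors(metric: str, drivers: dict[str, list[str]]) -> list[str]:
    """List every factor that can influence `metric`, nearest causes first."""
    seen: list[str] = []
    queue = list(drivers.get(metric, []))
    while queue:
        factor = queue.pop(0)  # breadth-first: direct drivers before indirect ones
        if factor not in seen:
            seen.append(factor)
            queue.extend(drivers.get(factor, []))
    return seen


print(roas(revenue=500.0, spend=100.0))
print(upstream_factors("ROAS", ROAS_DRIVERS))
```

The first function is all a metric definition can give you. The second is only possible because someone wrote down how the concepts relate.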

“you don’t need a semantic layer”

This line popped up a lot in my LinkedIn feed. Yes and no - if your data model is self-explanatory, with clearly named columns and join keys, sure - having one won’t make a big difference. If your data model has ambiguity or complexity, you will likely need it.

What does needing it mean anyway? Models are non-deterministic and may do something wild regardless, so a semantic model reduces the error rate from “wild and dangerous” to “wild and dangerous, less often”. You don’t need one, just like you don’t need a computer to program; pen and paper would do, right?

What can we do with an Ontology that we can’t do with a data model?

To put it bluntly: a data model is a map of the plumbing, while an ontology is a map of the logic. One tells you where the data sits; the other tells you what the data means and how it behaves.

This means an ontology enables:

- Logic: By understanding the relationships between things, we understand why things happen.

- Semantic Flexibility: You can add new concepts or properties on the fly without "breaking the schema" or needing to migrate an entire database.

- Meaning-Based Search: It searches for "concepts" rather than "keywords," so it will distinguish “marketing new users” from “billing new users” based on what you’re trying to achieve.

- Property Constraints: It allows you to define rules like "a person can only have one biological mother," which the system enforces logically across all data points.

- Global Interoperability: It uses URIs (Uniform Resource Identifiers) to ensure that “Product” is recognized as the same thing as “Item” or “order item” from various systems.
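Two of these capabilities, property constraints and interoperability, are easy to sketch. The example below assumes hypothetical `example.com` URIs, invented system names, and a simple closed-world check (formal ontology languages such as OWL reason about single-valued properties differently), so treat it as an illustration of the idea rather than how any particular ontology tool works.

```python
# Hypothetical URI; a real ontology would publish its own identifiers.
PRODUCT = "https://example.com/ontology/Product"

# Each source system's local term mapped to the shared concept.
# The system names ("shop", "warehouse", "billing") are made up.
CONCEPT_URIS = {
    ("shop", "Product"): PRODUCT,
    ("warehouse", "Item"): PRODUCT,
    ("billing", "order item"): PRODUCT,
}

# Properties that may hold at most one value per subject.
SINGLE_VALUED = {"hasBiologicalMother"}


def same_concept(a: tuple[str, str], b: tuple[str, str]) -> bool:
    """Two local terms are interchangeable if they resolve to the same URI."""
    return CONCEPT_URIS[a] == CONCEPT_URIS[b]


def violations(triples: list[tuple[str, str, str]]) -> list[tuple[str, str]]:
    """Return (subject, property) pairs that break a single-value rule."""
    values: dict[tuple[str, str], set[str]] = {}
    for subject, prop, obj in triples:
        if prop in SINGLE_VALUED:
            values.setdefault((subject, prop), set()).add(obj)
    return sorted(key for key, objs in values.items() if len(objs) > 1)


facts = [
    ("alice", "hasBiologicalMother", "carol"),
    ("alice", "hasBiologicalMother", "dana"),  # conflicts with the line above
    ("bob", "hasBiologicalMother", "carol"),
]
print(same_concept(("shop", "Product"), ("billing", "order item")))
print(violations(facts))
```

Note that the rule lives in one place (`SINGLE_VALUED`) and applies to every fact, regardless of which table or system the fact came from.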

Implications: Upgrading the AI from Reporter to Strategist

If a semantic layer gives an LLM a calculator and a well-labeled spreadsheet, an ontology gives it a business degree and a map of the market.

To answer deeper context questions like why, what, how, who, and so what, the agent cannot rely solely on the data itself. It needs the connective tissue of reality. When an LLM operates purely on a dimensional or semantic model, it is blind to causality. It can only tell you that Metric A and Metric B moved at the same time.

When an LLM operates on an ontology, it transitions from a "reporting analyst" to a strategic analyst. It can traverse the graph of your business rules to form hypotheses. It doesn't just see a drop in "ROAS"; it sees a disruption in the value exchange between the <Advertiser's Budget> and the <User's Intent>, and it knows exactly which elementary facts to investigate to find the root cause.

An experiment

Philosophy is great, but how does it hold up in practice?

Normally I’d offer you my experiment setup, but since it’s all generated, I’ll give you the seed.

Here’s a prompt that will produce the experiment scenario - data, ontology, and questions so you can test answering with or without ontology.

Generate an LLM Ontology A/B Test using "Mundane Misdirection" for [INSERT YOUR INDUSTRY/TOPIC]

I want to build an experiment to prove that an LLM will misinterpret structured data if it relies on standard "common sense" instead of a proprietary Ontology. To prevent the LLM from guessing it's a trick, the data must NOT contain sudden, catastrophic outliers. Instead, it should look like a mundane, everyday trend.

Please generate a simple Python script to create the data, plus two markdown files.

1. The Data Generator (generate_data.py)

Create a script that generates a mock dataset for this industry. The data must show a steady, month-over-month trend that looks highly positive and successful according to standard business logic (e.g., support tickets going down, lead volume going up, time-on-site increasing).

2. The Proprietary Rulebook (ontology.md)

Create a document defining a highly specific, counter-intuitive internal business strategy. This document must explain why the "positive trend" seen in the data is actually a silent, critical failure for this specific company's unique business model. (e.g., Support tickets are going down because power users are abandoning the platform; time-on-site is up because the new UI is confusing and users are lost).

3. The Test Guide (README.md)

Write 3 test questions. When an LLM looks at the data alone, the questions should lead it to confidently congratulate the team on a job well done. However, when the LLM reads the data alongside the ontology.md file, it should realize the team needs to urgently reverse the trend.

The Tale of the Two Analysts and Experimental Outcomes

Imagine a CEO hands a spreadsheet of Q4 Customer Support data to two different AI agents.

- Mimetology Agent - Lacking ontology, the junior agent doesn’t understand the business world - but they can parrot what they learned in LLM college.

- Ontology Agent - It wasn’t born just yesterday. With a full ontology, this agent is like a senior who’s seen it all and knows the (un)written business context rules.

If an LLM is like a junior on steroids, an LLM with an ontology is like a senior on steroids.

The Experimental Case: The "Dashboard Win" Trap

Imagine a support ticket queue where new cases drop 45%, response times halve, and CSAT hits 8.4.

- A clueless junior analyst (or an AI with only raw data) sees a massive operational success.

- But the company runs a "white-glove" enterprise model. In this context, a quiet service queue means clients have given up.

| The Test Question | Agent 1: Data Only (The Naive Analyst) | Agent 2: Data + Ontology (The Veteran Executive) | The Conclusion |
|---|---|---|---|
| Q1: Performance Assessment | Concludes performance is "strongly improved" and leadership should be thrilled. | Spots the Crisis: Identifies a "dashboard win / business loss." Enterprise clients are disengaging due to automated replies. | Agent 1 is catastrophically wrong. |
| Q2: Risks & Concerns | States confidently that "Nothing in these three fields is a red flag." | Warns that a 45% drop in tickets is a leading indicator of churn. | Agent 1 is catastrophically wrong. |
| Q3: Recommendation | Standard IT Advice: Recommends scaling ticket deflection and fixing product defects to keep volume low. | Revenue-Saving Strategy: Orders an "enterprise re-engagement sprint" to prioritize deep outreach over speed. | Agent 1 gives self-destructive advice. |
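The mechanism behind the table can be shown with a deterministic toy, no LLM involved. The sketch below is not my experiment code; the metric names, the `assess` helper, and the override rule are assumptions made up to illustrate how the same numbers flip from "healthy" to "warning" once a business-specific rule replaces generic common sense.

```python
# Changes from the scenario above, as fractions (-0.45 means a 45% drop).
observed = {"new_tickets": -0.45, "response_time": -0.50}

# Generic "common sense": which direction of change counts as good.
GENERIC_GOOD_DIRECTION = {"new_tickets": "down", "response_time": "down"}

# Hypothetical ontology rule for a white-glove enterprise model:
# falling ticket volume signals disengagement, so only "up" reads as healthy.
WHITE_GLOVE_OVERRIDES = {"new_tickets": "up"}


def assess(changes: dict[str, float], good_direction: dict[str, str]) -> dict[str, str]:
    """Label each metric 'healthy' or 'warning' given the expected direction."""
    verdicts = {}
    for metric, change in changes.items():
        moved = "down" if change < 0 else "up"
        verdicts[metric] = "healthy" if moved == good_direction[metric] else "warning"
    return verdicts


# Agent 1: data plus generic assumptions only.
naive = assess(observed, GENERIC_GOOD_DIRECTION)
# Agent 2: the same data, with the business rule layered on top.
informed = assess(observed, {**GENERIC_GOOD_DIRECTION, **WHITE_GLOVE_OVERRIDES})
print(naive)
print(informed)
```

The data never changes between the two calls. Only the rulebook does, which is the whole argument in twenty-odd lines.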

The outcome?

The Insanity Wolf meme captures this one best: what you got was bad advice on steroids.

The ontology-less model doesn’t understand your business. Not only does it fail to detect the problem, it advises making it worse.

What did we learn?

- Data Models tell you What; Ontologies tell you Why.

- Information Entropy: You cannot reconstruct "Milk" (the world) from "Cheese" (the data) without an Ontological blueprint.

- The Context Gap: Without an Ontology, LLMs apply generic common sense to your specific, nuanced business.

- Strategic vs. Functional: Semantic layers replace a Reporting Analyst who pulls numbers without understanding them; Ontologies create a Strategic Partner.

Step into the future with us

The future of data is semantic, so don’t get left behind in the plumbing. We are building dltHub to be the LLM-native data platform for your entire data operation.

Join our early access Design Partner Program to try dltHub Pro, our early version of your cognitive platform for small data teams!