Blog//

What's the Minimum Viable Context for Building a Canonical Data Model with an LLM?

Call it the MVC problem: minimum viable context. Too little and it hallucinates your domain. Too much and it drifts from your actual goal. The process has to be controlled.

Hiba Jamal,

Hiba Jamal,

Junior Data & AI Manager

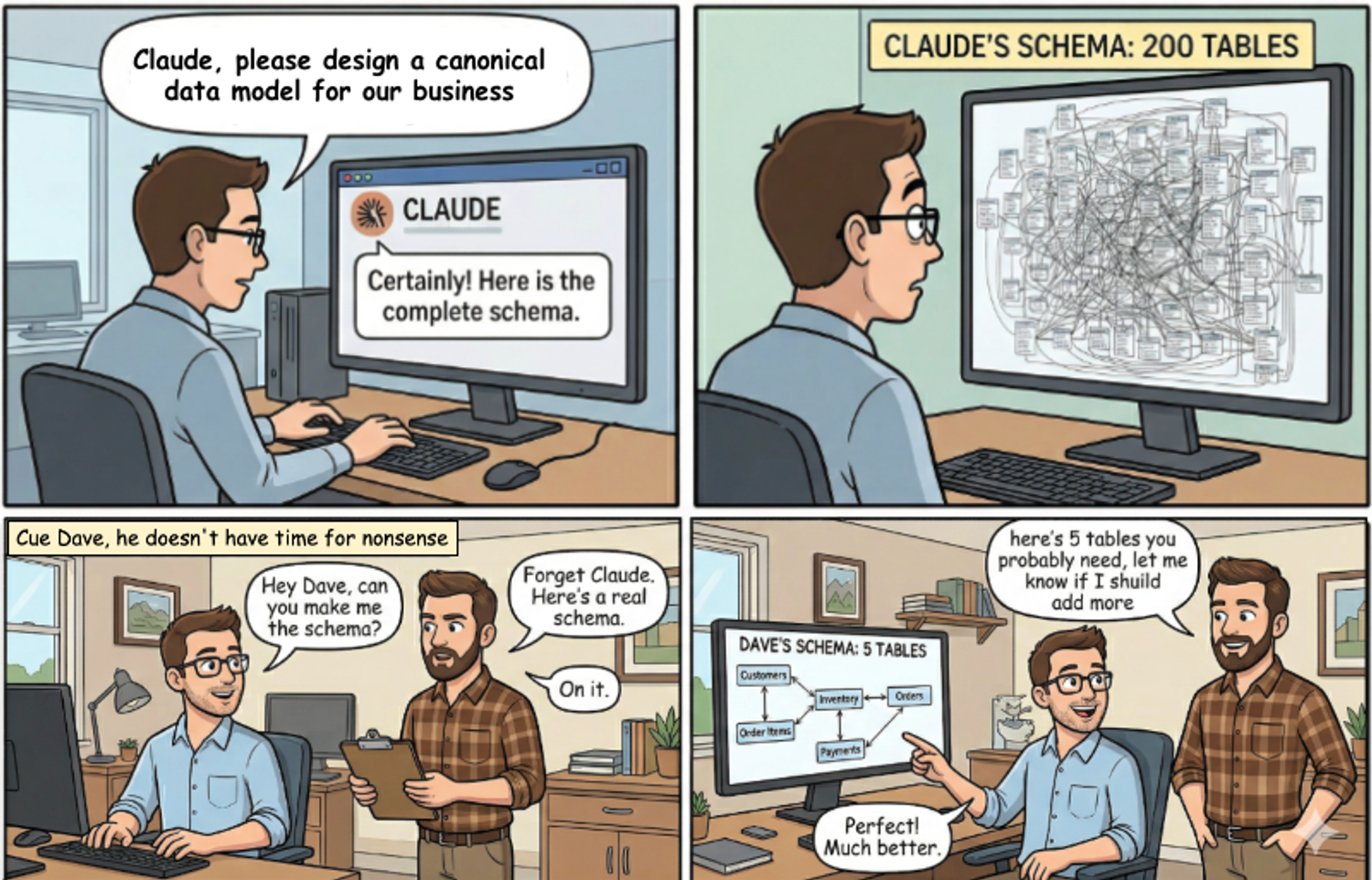

More input is not a better prompt. If there's one thing that came out of building the CDM (Canonical data model) toolkit, it's that the amount of context you give an LLM matters just as much as the quality of it. And most people give it too much.

There's a real tension here: you need the LLM to understand your business well enough to model it, but if you flood it with irrelevant information, it starts modeling things no one cares about, or stitching connections between entities that have no business being in the same data model.

Call it the MVC problem: minimum viable context. Too little and it hallucinates your domain. Too much and it drifts from your actual goal. The process has to be controlled.

We tried three approaches getting this right. Here's what each one taught us.

Approach 1: Let the User Talk — The 20 Questions Method

Our starting point came from a previous experiment with entity extraction, a guided Q&A workflow where a sequence of targeted questions helped surface the key concepts in a business domain. The idea was sound: ask the right things, get the right context, model the right entities.

The problem wasn't the questions. It was what happened after. Feeding a dense, wide-ranging conversation into a CDM workflow gave the LLM too much to work with and not enough direction. Without a clear goal to anchor to, it modeled everything, including entities that belonged to no one's actual use case and relationships that exist in the real world but have no place in a focused data model.

It wasn't wrong, exactly. It was trying to be complete when you needed it to be useful. And on the human side? a 20-question intake is a real ask. The load on the user was high, and so was the noise going into the model. Neither party was winning.

More context without more focus just means more drift.

Approach 2: Business Scenarios — Right Instinct, Wrong Boundary

The second approach tightened the input: instead of a full Q&A, we asked users to describe three to five business scenarios in plain language.

- "A customer places an order with multiple products"

- "A subscription renews monthly and can be paused"

- "Sales reps are assigned to accounts by region"

From those scenarios, the skill would extract what needs to be measured (feeding the star schema) and how entities relate (feeding the ontology). Clean in theory. And when it worked, it worked well.

The failure mode was predictable once we saw it: scenarios that crossed department lines.

Take modeling a ride-service company like SWVL — app-based bus routes. The scenarios look scoped at first:

A vehicle has a capacity, different car types have different limits. A vehicle only runs certain routes. Drivers and vehicles are paired one-to-one. Some drivers are independent contractors; others come through vendor agreements. The company pays vendors, vendors pay drivers.

That last point is where scope breaks. The moment vendor relationships and route ownership enter the picture, you've crossed from operations into finance into HR simultaneously — and the LLM follows you there. It starts connecting Capacity ↔ Driver Employment Type, Route ↔ Payment Flow, Vendor ↔ Route Ownership. Every one of those connections exists somewhere in SWVL's actual business. None of them belong in the same data model.

This is a metacognition gap — metacognition being the ability to monitor and regulate your own reasoning, to stop and ask "is this connection actually serving my goal?" A human modeler does this instinctively. An LLM doesn't; it follows every thread you hand it, thoroughly, without self-limiting.

The issue isn't the LLM's reasoning. It's that business use cases encapsulated in data models are naturally department-scoped: marketing cares about campaign visibility, sales cares about revenue, ops and finance care about transaction costs. Each team has attributes and relationships the other teams don't need. Scenarios that span those lines hand the LLM a problem it can't cleanly decompose.

It would eventually arrive at the right relationships. But the path was strenuous — for the model and for the user.

Approach 3: Start with Intent

The third approach dropped document-first thinking entirely and asked a different question: not what exists in your business, but what are you trying to do with your data?

The workflow is deliberately minimal:

- Enter your company name and an optional short description

- The LLM runs a web search to understand what the business does

- You pick a development goal — analytics? Cost tracking? Operational visibility?

That's three inputs. From there, the skill bootstraps a focused ontology and CDM scoped entirely to the stated goal — without needing you to enumerate entities, define relationships, or walk through scenarios.

For “dltHub” + ”analytics goal”, it arrives at something close to our own telemetry pipeline: track pipeline runs, monitor success rates, measure duration. That's the right answer, and it got there without needing to know everything about dltHub. The lowest load on the user, the most focused output.

The tradeoff is real and worth naming: this approach sandboxes a core use case, it doesn't map your whole business. If our goal was to track Anthropic API costs, entering "dltHub" as company will send the model in the wrong direction. The use case is too specific for a general web search to surface. Approach 3 is the right starting point for most teams; it's not the right tool for every edge case.

The Takeaway

The question was never how do I give the LLM everything it needs? It's: what's the minimum it needs to give me something useful?

Our learning across these 3 approaches is that you get what you put in - a clear use case? you get a tool for that. Unclear use case? you get a “bottom up” unopinionated model that describes your business without being useful.

Try It

The transformation toolkit is part of the dltHub AI Workbench — an open collection of toolkits that plug into your AI coding assistant (Claude Code, Cursor, or Codex) and give it the skills to build, explore, and transform dlt pipelines.

To get started, install dlt with hub support and initialize the workbench:

uv pip install "dlt[hub]" uv run dlt ai init uv run dlt ai toolkit transformations install

Or if you're already in a Claude Code session:

/plugin marketplace add dlt-hub/dlthub-ai-workbench /plugin install transformations@dlthub-ai-workbench --scope project

Then ask your assistant to annotate-sources — that's the entry point. It'll walk you through your existing pipelines, map your schemas to canonical concepts, and kick off the ontology → CDM workflow from there.

The full workbench includes toolkits for REST API ingestion, data exploration, and production deployment too — so you can go from raw API to deployed, well-modeled pipeline without leaving your editor.

The ontology-driven data modelling toolkit is part of the dltHub AI Workbench, available in dltHub Pro, due for release in Q2 and currently in design partnership stage. Currently, it’s being leveraged by commercial data engineering agencies who benefit from standardisation and acceleration. if you’re interested, apply for the design partnership!