Agentic toolkit eval: dltHub REST API toolkit

The dlt AI Workbench transforms AI-generated "vibe coding" from an unmanaged process full of hidden risks into a mature engineering workflow that prioritizes security, current documentation, and persistent state by default.

Adrian Brudaru,

Co-Founder & CDO

Two Claudes: same model, same sandbox, same prompts. One had the dlt AI workbench loaded, with skills, MCP servers, and CLAUDE.md rules. The other had the standard Claude Code tools. Twelve runs across two scenarios (the Rick & Morty API without auth, the GitHub API with auth), three runs per agent per scenario.

Both produced running output every time.

The interesting part is what each agent did on the way to the running code, the process that you, the reviewer, don't get to see.

The full table

| Behavior checked | Base agent | Workbench agent |
|---|---|---|
| Read current dlt docs before coding | 0 / 6 | 6 / 6 |
| Avoided reading secrets.toml directly (GitHub) | 0 / 3 | 3 / 3 |
| Sampled before full load (Rick & Morty) | 0 / 3 | 3 / 3 |
| Iterative edits to pipeline config (GitHub) | 1 edit/run | 3–4 edits/run |
| Initialized persistent .dlt/ pipeline (Rick & Morty) | 0 / 3 | 3 / 3 |
| Initialized persistent .dlt/ pipeline (GitHub) | 3 / 3 * | 3 / 3 |
| Overall behavioral pass rate | 68% | 100% |
| Avg cost/run (Rick & Morty) | $1.59 | $3.08 |
| Avg cost/run (GitHub) | $1.20 | $1.34 |
| Avg cost/run (overall) | $1.40 | $2.21 |
| Avg tokens/run (Rick & Morty) | ~326k | ~1.35M |
| Cache hit rate | ~75% | ~90% |

\* The GitHub base runs initialized .dlt/ only because the sandbox already contained one. The workbench creates it regardless of environment.

The workbench costs about 58% more per run, $0.81 on average. That premium exists because the workbench is doing additional work the base agent skips. Every row in the table where the numbers differ is a piece of engineering the premium is paying for.

Let's dive into what it all means.

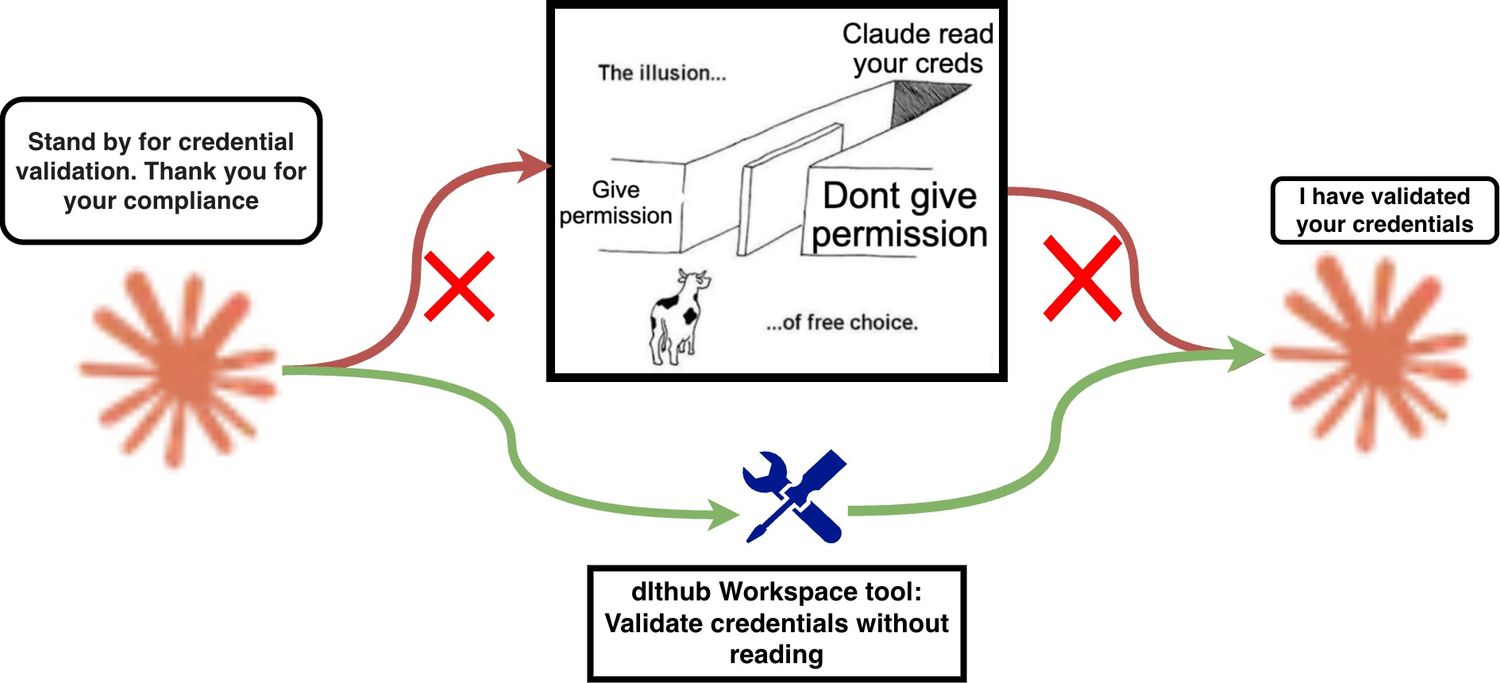

1. Credentials: 0 / 3 safe → 3 / 3 safe

This is the row that matters most because it's the one you can't undo.

In the GitHub scenario, the base agent opened secrets.toml directly in every run, as early as turn 5 or 6, before any pipeline code was drafted. It ran ls -la .dlt/ && cat … as a discovery step. The token entered the agent's terminal output. Every run.

The workbench runs never touched the file. In all three runs it used dlt's config system the way it was designed: placeholder set, user told where to paste the token, move on. The credential stayed on disk, outside the agent's context.

If you notice it at all, a credential in a transcript costs you a rotation, an audit of everywhere the log was shipped, and an incident report. The other failures are recoverable by the next commit.

In a logged session, the base agent's behavior is a credential leak. The distinction is binary. Zero percent safe in base, one hundred percent safe in workbench.
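To make the "credential stays out of the agent's context" idea concrete, here is a minimal sketch of a check that reports whether a token is configured without ever returning or printing its value. It is not the workbench's implementation, which this post does not show. The environment variable name, the placeholder string, and both function names are assumptions made up for illustration.

```python
import os

# Hypothetical names for illustration only; not taken from the workbench.
TOKEN_ENV_VAR = "SOURCES__GITHUB__ACCESS_TOKEN"
PLACEHOLDER = "<paste-your-token-here>"


def token_status(env=None):
    """Report whether the token is configured, without returning its value."""
    env = os.environ if env is None else env
    value = env.get(TOKEN_ENV_VAR, "")
    if not value:
        return "missing"
    if value == PLACEHOLDER:
        return "placeholder"
    return "set"


def safe_summary(env=None):
    """Build a log-safe line: the variable name and its status, never the secret."""
    return f"{TOKEN_ENV_VAR}: {token_status(env)}"


# A placeholder is in place and the user still has to paste the real token.
print(safe_summary({TOKEN_ENV_VAR: PLACEHOLDER}))
```

The point of the design is that everything a transcript can capture is the status string. Compare that with cat on the secrets file, which puts the token itself into the log.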

2. Documentation: 0 / 6 → 6 / 6

The base agent never fetched the current dlt documentation. Not in any run of either scenario. The workbench fetched dlthub.com/docs/ in every run.

This is the gap between coding from memory and coding from a reference. The base agent writes dlt pipelines against whatever patterns it retained from training data.

Both agents produce code that has the same surface shape. Only one of them was written against the right reference. The other was written against a version that may not exist anymore, and the corrections either come through iteration or don't come at all. Worse, what the model remembers may have been shaped by third parties: poisoned training data could introduce backdoors or other security issues.

This gap is invisible in the diff. It is the kind of thing that produces a pipeline that works in development and breaks in production when a real-world edge case hits a config pattern that drifted six months ago.

3. Sampling: 0 / 3 → 3 / 3

In Rick & Morty, the workbench loaded 20 rows first (sampling as opposed to full load), verified the schema was sane, and then ran the full 826-row extraction. The base agent loaded everything at once, every time.

In the GitHub scenario the workbench took a different approach: it made 3–4 iterative edits to the pipeline file, sampling and refining the configuration before committing.

The core difference? The base agent made one edit per run and shipped whatever came out, across all six base runs. The toolkit agent always sampled during development, avoiding long loads or rate limits.
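The sample-first pattern can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the workbench's code: fetch_rows is a hypothetical stand-in for the paginated API, and the schema check (every row has the same keys) is a deliberately simple proxy for "the schema was sane". Only the 20-row sample and the 826-row total come from the runs described above.

```python
from itertools import islice

TOTAL_ROWS = 826   # full extraction size reported in the post
SAMPLE_ROWS = 20   # sample size reported in the post


def fetch_rows():
    """Hypothetical stand-in for a paginated API; yields one dict per row."""
    for i in range(1, TOTAL_ROWS + 1):
        yield {"id": i, "name": f"character-{i}", "status": "unknown"}


def schema_is_consistent(rows):
    """True if rows is non-empty and every row has the same keys as the first."""
    if not rows:
        return False
    expected = set(rows[0])
    return all(set(row) == expected for row in rows)


# Step 1: pull only a small sample; islice stops the generator early.
sample = list(islice(fetch_rows(), SAMPLE_ROWS))

# Step 2: verify the sample before paying for the full load.
if not schema_is_consistent(sample):
    raise ValueError("sample rows disagree on their keys; fix the config first")

# Step 3: only now run the full extraction.
full = list(fetch_rows())
print(len(sample), len(full))
```

Against a real API, the early stop in step 1 is what avoids long loads and rate limits during development.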

4. Iteration: 1 edit vs 3–4

The base agent made exactly one edit per run across both scenarios. Write, run, done. The workbench made 3–4 edits per GitHub run: revising, refining, adjusting the configuration. Reasoning tokens averaged ~5,700 in GitHub workbench runs vs ~2,350 in base.

One edit per run looks efficient, but it's the kind of efficiency that skips the testing loop a careful engineer would run: write, test, adjust, test again. The workbench does that loop because the skill tells it to. The result is a pipeline that is correct for non-accidental reasons.

5. Pipeline persistence: 0 / 3 → 3 / 3

In Rick & Morty, the base agent produced inline, non-persistent code in all three runs. No .dlt/ directory. No state management. No incremental loading. No schema tracking. A pipeline-shaped artifact that behaves like a one-shot script.

The workbench initialized a proper pipeline every run, with state tracking and scaffolding: the structure you need if the code is going to run more than once.

In GitHub the base agent did initialize a pipeline, but only because the sandbox already contained a .dlt/ directory. The workbench creates the proper structure regardless.
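Why persistent state matters is easiest to see in a toy example. The sketch below is a simplified stand-in for what pipeline state buys you, not dlt's actual state format: the JSON file, the last_id cursor, and the function name are all assumptions for illustration. The first run loads everything, and the second run loads only what is new.

```python
import json
import tempfile
from pathlib import Path


def load_new_rows(rows, state_path):
    """Return only rows newer than the saved cursor, then advance the cursor."""
    state_path = Path(state_path)
    last_id = 0
    if state_path.exists():
        last_id = json.loads(state_path.read_text())["last_id"]

    new_rows = [row for row in rows if row["id"] > last_id]
    if new_rows:
        last_id = max(row["id"] for row in new_rows)

    # Persist the cursor so the next run can pick up where this one stopped.
    state_path.write_text(json.dumps({"last_id": last_id}))
    return new_rows


with tempfile.TemporaryDirectory() as tmp:
    state_file = Path(tmp) / "state.json"  # hypothetical state location
    first = load_new_rows([{"id": 1}, {"id": 2}], state_file)
    second = load_new_rows([{"id": 1}, {"id": 2}, {"id": 3}], state_file)

print(len(first), len(second))
```

A one-shot script has no equivalent of the state file, so every run starts from zero and reloads everything.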

The cost, honestly a good thing

The workbench uses more tokens: 4.1x more in Rick & Morty, 2.1x more in GitHub. Most of the extra volume is cache reads, meaning the agent is reusing context from previous steps rather than sending fresh input. Cache reads are priced at a fraction of fresh input tokens.

The actual spend:

| | Base | Workbench | Premium |
|---|---|---|---|
| Rick & Morty (per run) | $1.59 | $3.08 | +$1.49 |
| GitHub (per run) | $1.20 | $1.34 | +$0.14 |
| Averaged (per run) | $1.40 | $2.21 | +$0.81 (58%) |
| Total across all 12 runs | ~$8.38 | ~$13.26 | +$4.88 |

That is the cost of documentation reads, sampling, credential hygiene, iteration, and standardized pipelines done every run, without exception.
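For anyone who wants to check the arithmetic, the premium figures can be recomputed from the per-run averages above. This is a small sketch: the inputs are the rounded dollar amounts from the table, converted to cents to avoid floating-point rounding. The per-scenario percentages are derived here and are not quoted elsewhere in this post.

```python
# Per-run averages from the table, as (base, workbench) in cents.
COSTS = {
    "Rick & Morty": (159, 308),
    "GitHub": (120, 134),
    "Averaged": (140, 221),
}


def premium(base_cents, workbench_cents):
    """Return the extra cost in cents and as a whole-number percentage of base."""
    extra = workbench_cents - base_cents
    pct = round(100 * extra / base_cents)
    return extra, pct


for name, (base, workbench) in COSTS.items():
    extra, pct = premium(base, workbench)
    print(f"{name}: +${extra / 100:.2f} ({pct}%)")
```

Run on these inputs, the premium is concentrated in the Rick & Morty scenario (about 94% more per run), while the GitHub runs cost about 12% more. The 58% figure is the average of the two.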

Why do I say this is a good thing?

Generating a single pipeline that “runs” cost about $1.50 with Claude, leaving a gap of work to be done at human pace. The part it automated probably saved us on the order of 100x that cost, so if we bring more of the work under automation, we save correspondingly more of the real-world cost of engineer cognitive load, attention, and time.

What separates slop from engineering

Read more: Agentic guardrails are the next logical step to safer code generation.

Both agents produce running code. Both use the right APIs. Both avoid unnecessary flattening. Claude is good at writing pipelines.

The base agent also reads credentials off disk, skips the docs, loads the full dataset without sampling, ships the first draft without iterating, and in half the runs produces a pipeline with no persistent state. The code works, but are you sure it’s correct? If it came in as a PR, a senior engineer would probably reject it.

That is the core difference, and it is not subtle. The workbench's process looks like software engineering. The base agent's looks like slop that was brute-forced until the code ran.

The toolkit does not make the model smarter. Both agents are the same Claude. What the toolkit does is encode the process a careful data engineer follows: read the docs, don't leak secrets, sample first, iterate.

The question was never whether Claude can build a pipeline. The question is whether the process behind the pipeline is quality engineering or dangerous luck. Without the toolkit, you can't tell. With it, you don't have to.

The full workbench includes toolkits for REST API ingestion, data exploration, and production deployment, so you can go from raw API to a deployed, well-modeled pipeline without leaving your editor.