About Tasman Analytics

Tasman Analytics is a ~20-person data analytics consultancy based in Amsterdam and London. They build platforms, deliver insights, and enable internal teams - all oriented around better business decisions and outcomes. They sell fractional data teams to mid-market and enterprise clients, designing, building, and operating data platforms end-to-end: from infrastructure through insights and team capability.

Tasman keeps its stack flexible - choosing the best tool for each client engagement. The team adopted dltHub Pro specifically for its speed in prototyping connectors and its local-first development workflow.

CEO Thomas in't Veld and Staff Data Engineer Marcello Victorino participated in a dltHub Pro design partnership and shared their experiences at a dltHub launch event in Amsterdam in January 2026.

Highlights

- Scope new client data sources live in a meeting - not after a 2-week proposal

- Mid-level engineers ship production-quality REST API connectors in an afternoon - work that used to take a senior engineer a week

- Fixed-price, high-margin projects are now commercially viable

The Challenge

For consulting owners: every new client API was a project risk before the project started

For consulting teams, every new client data source used to be a significant upfront investment. Scoping a new API connector meant assigning a senior engineer for up to two weeks: understanding pagination patterns, handling nested data, debugging authentication flows, and normalizing schemas. The cost and risk landed entirely in the pre-sales phase - before any contract was signed. Fixed-price projects were high risk, and the team's most experienced engineers were always the bottleneck.

For engineers: as LLMs make building easy, trusting the output becomes the new hard part

As large language models commoditise the act of writing dlt pipelines, the bottleneck shifts to understanding what the data looks like after it lands. Generating a pipeline in ten minutes is only valuable if you can quickly inspect the output, catch unexpected data shapes, and fix schema issues before they reach production. This is the new critical challenge - and it's where dltHub Pro is purpose-built to help.

"The LLM shift means building is no longer the hard part. It's no longer 'can we connect to this API?' - it's 'can we actually see what came back, catch the weird nesting, and fix the schema before it hits production?' Seeing, validating, and trusting the AI output is where dltHub Pro sets itself apart." - Marcello Victorino, Staff Data Engineer, Tasman Analytics

Every New Client API Was a Project Risk Before It Even Started

Before dltHub: 2 weeks to prototype each new API connector

With dltHub Pro + AI: 20 minutes from API docs to running pipeline

1. Scoping Transformed: Live Data in 20 Minutes

With dltHub Pro's toolkit for developing REST API ingestion pipelines, Tasman can now scope a new data source in a single session. An engineer runs the toolkit in Claude Code, and within 20 minutes has a running dlt pipeline connected to a local DuckDB instance - with schema inference, automatic nested data normalization, and real-time logging built in.
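To make "automatic nested data normalization" concrete, here is a minimal plain-Python sketch of the general idea: nested objects are flattened into prefixed columns, and lists are split into child tables linked back to their parent row. It is an illustration only, not dlt's implementation; the function names, the `_parent_id` link column, and the double-underscore naming are assumptions made for this example.

```python
from typing import Any, Iterator


def flatten(record: dict, prefix: str = "") -> Iterator[tuple[str, Any]]:
    """Yield (column, value) pairs, joining nested object keys with '__'."""
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            # Nested objects become prefixed columns on the same row.
            yield from flatten(value, prefix=f"{name}__")
        else:
            yield name, value


def normalize(table: str, record: dict, tables: dict, parent_id: Any = None) -> dict:
    """Split one nested record into rows across a parent table and child tables."""
    # Hypothetical link column: child rows point back at the parent's "id".
    row = {} if parent_id is None else {"_parent_id": parent_id}
    for column, value in flatten(record):
        if isinstance(value, list):
            # Each list item becomes a row in a child table named after the column.
            for item in value:
                child = item if isinstance(item, dict) else {"value": item}
                normalize(f"{table}__{column}", child, tables, parent_id=record.get("id"))
        else:
            row[column] = value
    tables.setdefault(table, []).append(row)
    return tables


order = {"id": 1, "customer": {"name": "Ada"}, "items": [{"sku": "A"}, {"sku": "B"}]}
tables = normalize("orders", order, {})
```

In this sketch, `tables` ends up with one `orders` row carrying a `customer__name` column and two `orders__items` rows that reference the parent order.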

At the January 2026 dltHub launch event in Amsterdam, engineers went from zero to a live running pipeline with a report in a single sitting. This changes the economics of client scoping entirely. Instead of committing to a data source in a proposal document, Tasman can try it live in front of the client. Fixed-price projects - once too risky to pursue - have become a genuine commercial opportunity.

"dltHub Pro is game-changing for us and the industry - it allows us to prototype new client data connectors very quickly. You still have to do the proper engineering work - guardrails, CI/CD, testing - but this enables the sort of 'data at speed of thought' that we are building towards." - Thomas in't Veld, CEO, Tasman Analytics

"We are busier than ever at Tasman Analytics - our clients need great AI-ready data pipelines fast. That means every minute saved with rapid prototyping and scoping is critical. With dltHub Pro we can now consider fixed-price high-margin projects - these are much lower risk." - Thomas in't Veld, CEO, Tasman Analytics

2. Delivery Transformed: Speed and Quality at Scale

The speed gain extends beyond individual pipelines. The deeper shift is how expertise scales across the team - and how organizational knowledge becomes a compounding asset rather than a dependency on a handful of senior engineers.

A - Client Delivery: When a mid-level engineer ships in an afternoon what used to take a senior a week, you stop selling projects and start showing value on day one.

B - Scaling Expertise: When your best engineers' knowledge lives in Skills, dltHub Pro makes it available to your whole team.

Before dltHub Pro's AI Toolkits, building a production-quality REST API connector required a senior engineer - someone who understood pagination patterns, incremental loading strategies, and how to debug authentication flows across dozens of different API designs. That expertise lived in three or four people at Tasman, and they were always the bottleneck.

Now Tasman works with dltHub AI Toolkits for each step of the pipeline lifecycle: bootstrapping from API docs, adding endpoints, configuring credentials, pagination, and incremental loads, then running and debugging. Combined with Claude Skills that encode the firm's naming conventions, rate-limiting patterns, and end-to-end tooling standards, a mid-level engineer can ship in an afternoon a production-quality REST API connector that used to take a senior engineer a full week.

"At Tasman, we're using Claude Skills as one component of how we accelerate delivery - encoding our standards so every engineer generates pipelines that follow our conventions, not generic defaults. The Toolkits in dltHub's AI Workbench turbocharge that approach." - Thomas in't Veld, CEO, Tasman Analytics
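Two of the lifecycle steps named above, pagination and incremental loading, are where much of the senior-engineer expertise used to go. The sketch below shows the general pattern in plain Python: follow next-page pointers until none remain, then keep only records newer than a stored cursor. It is not dlt or Toolkit code; `fake_api`, `PAGES`, the `next` field, and the `updated_at` cursor are hypothetical stand-ins for a real client API.

```python
from typing import Callable, Iterator

# Hypothetical in-memory API: each page lists items and points at the next page.
PAGES = {
    1: {"items": [{"id": 1, "updated_at": "2026-01-01"},
                  {"id": 2, "updated_at": "2026-01-02"}], "next": 2},
    2: {"items": [{"id": 3, "updated_at": "2026-01-03"}], "next": None},
}


def fake_api(page_number: int) -> dict:
    """Stand-in for an HTTP call that returns one page of results."""
    return PAGES[page_number]


def fetch_all(get_page: Callable[[int], dict]) -> Iterator[dict]:
    """Follow next-page pointers until the API reports no further page."""
    page_number = 1
    while page_number is not None:
        page = get_page(page_number)
        yield from page["items"]
        page_number = page["next"]


def load_incrementally(get_page: Callable[[int], dict], state: dict) -> list:
    """Return only records newer than the stored cursor, then advance the cursor."""
    last_seen = state.get("updated_at", "")
    # ISO-8601 date strings sort chronologically, so string comparison is enough here.
    fresh = [r for r in fetch_all(get_page) if r["updated_at"] > last_seen]
    if fresh:
        state["updated_at"] = max(r["updated_at"] for r in fresh)
    return fresh


state = {}
first_run = load_incrementally(fake_api, state)   # loads everything
second_run = load_incrementally(fake_api, state)  # nothing new since the cursor
```

The sketch filters on the client side for simplicity; a real connector would normally pass the cursor to the API as a query parameter so that only new records are transferred.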

When AI makes it easy to build, dltHub Pro makes it easy to put into production

As LLMs commoditise writing dlt pipelines, the bottleneck shifts to understanding what the data looks like after it lands. The new critical capabilities are: data exploration (can I see what I just ingested?), logging (what happened during the run?), a fast prototype-inspect-fix-re-run loop, and data quality (is the output correct?).

"dltHub Pro is geared towards exactly that - data exploration, logging, quick prototyping and data quality are first-class concerns, not afterthoughts. That's what you need when any engineer can spin up a pipeline in minutes." - Marcello Victorino, Staff Data Engineer, Tasman Analytics

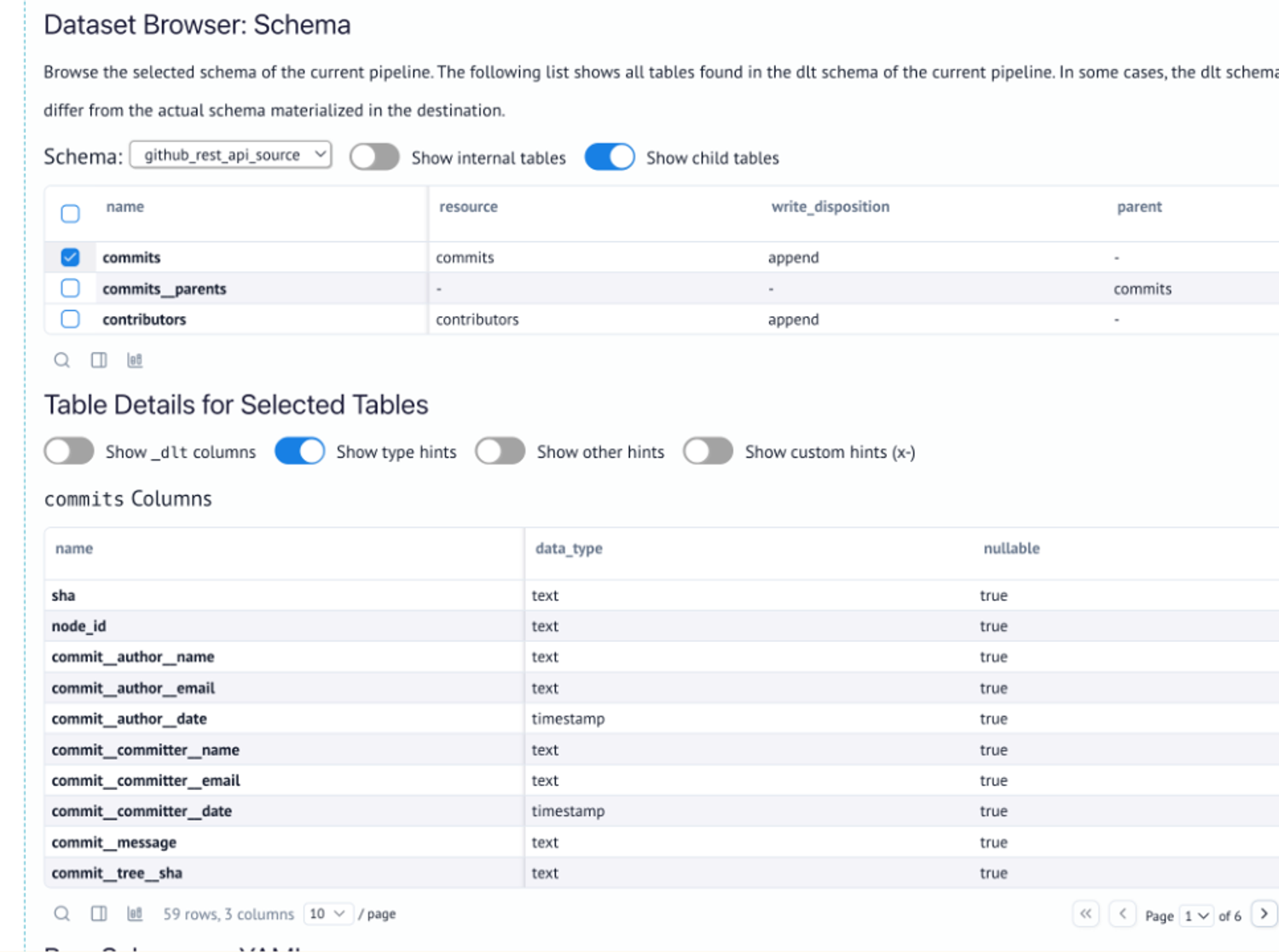

1. They explore nested source data right inside the pipeline with the dltHub Pro Dataset Browser

The dltHub Pro Dataset Browser gives engineers full visibility into source data before it ever reaches the warehouse. Column name mismatches, unexpected nesting, and schema anomalies are caught and fixed in the pipeline - not after data has landed downstream.

"The Dataset Browser changed how we scope. We can inspect nested source data right inside the pipeline - spot a column name mismatch, fix it, and move on. With Fivetran or Singer you're flying blind until data lands in the warehouse. With dlt, my engineers debug in the pipeline, not after it." - Marcello Victorino, Staff Data Engineer, Tasman Analytics
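The kind of check described here, spotting column name mismatches and unexpected nesting before data lands downstream, can be sketched in a few lines of plain Python. This is not the Dataset Browser or any dltHub API; `schema_report` and the sample rows are hypothetical and only show what such an inspection looks for.

```python
def schema_report(rows: list, expected: set) -> dict:
    """Compare observed columns against expected ones and flag nested values."""
    observed, nested = set(), set()
    for row in rows:
        for column, value in row.items():
            observed.add(column)
            # Dicts and lists signal nesting that a flat table cannot hold as-is.
            if isinstance(value, (dict, list)):
                nested.add(column)
    return {
        "missing": expected - observed,      # expected but never seen
        "unexpected": observed - expected,   # seen but not expected
        "nested": nested,                    # columns holding nested data
    }


# Hypothetical API response: camelCase name and an unplanned nested list.
rows = [{"id": 1, "customerName": "Ada", "items": [{"sku": "A"}]}]
report = schema_report(rows, expected={"id", "customer_name"})
```

Here the report flags `customer_name` as missing, `customerName` and `items` as unexpected, and `items` as nested, which is the sort of mismatch an engineer would fix in the pipeline rather than in the warehouse.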

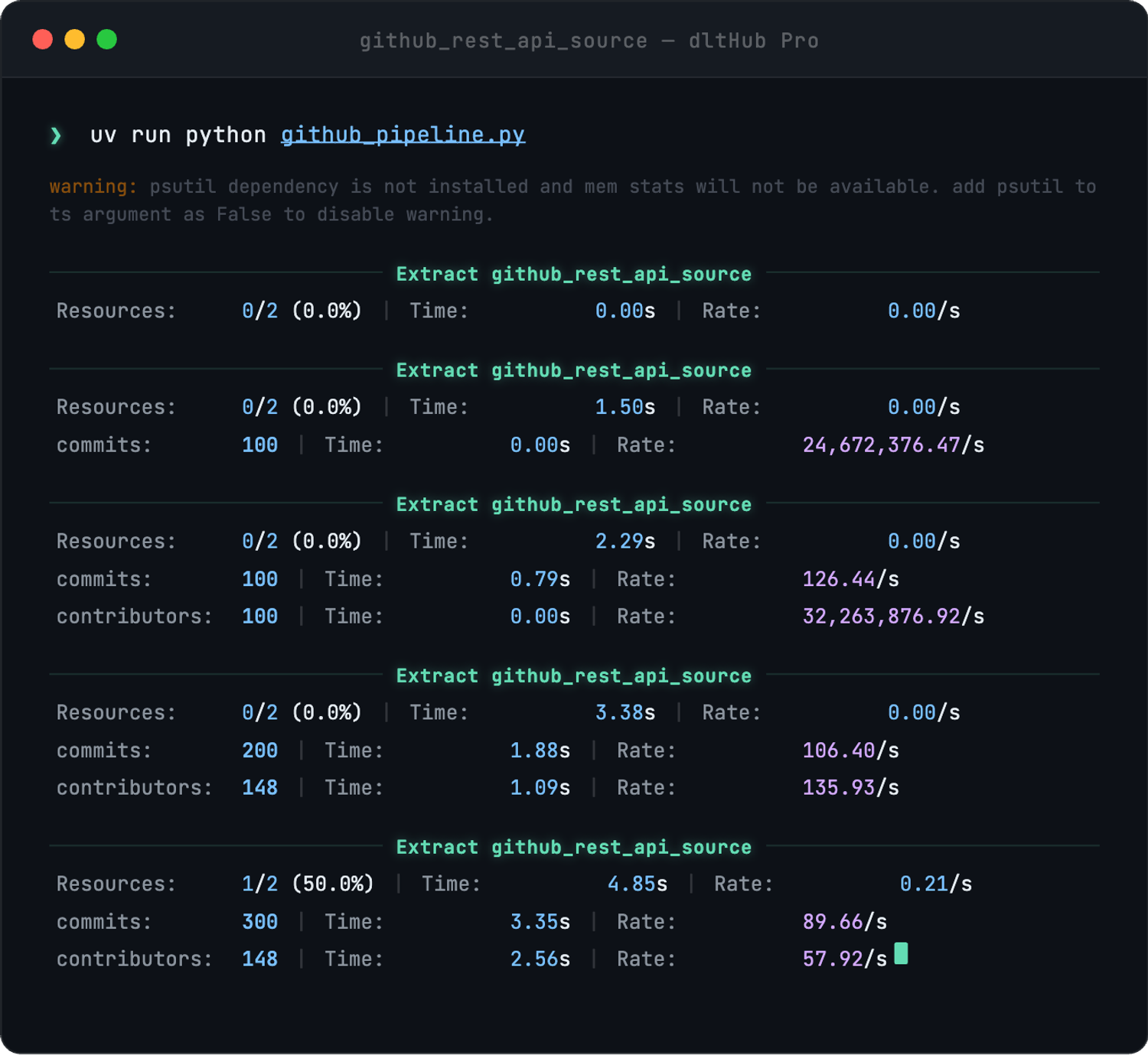

2. They use dltHub Pro's native logging to see real-time progress per resource, with parallelisation visibility out of the box

dltHub Pro's native logging delivers real-time progress per resource and parallelisation visibility out of the box. Because AI-generated pipelines can fail in ways nobody predicted, this level of observability is essential for running that code confidently in production CI/CD pipelines.

"AI-generated pipelines ship fast, but they also fail in ways nobody predicted. That's why dlt's logging matters so much to us. We get detailed, readable traces in CI/CD - not the cryptic nonsense you get from most connectors. If you can't add custom logging to diagnose production failures, you can't run AI-generated code in production. Full stop." - Marcello Victorino, Staff Data Engineer, Tasman Analytics
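As a rough illustration of what per-resource logging buys you in CI/CD, the sketch below uses only Python's standard `logging` module. It is not dltHub Pro's logging; `run_resources` and the resource names are hypothetical. Each resource logs its own row count and duration, and a failure is recorded with a full traceback without hiding the results of the other resources.

```python
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
logger = logging.getLogger("pipeline")


def run_resources(resources: dict) -> dict:
    """Run each resource loader, logging outcome per resource; None marks a failure."""
    results = {}
    for name, load in resources.items():
        started = time.perf_counter()
        try:
            rows = load()
        except Exception:
            # logger.exception records the traceback alongside the resource name.
            logger.exception("resource=%s status=failed", name)
            results[name] = None
            continue
        elapsed = time.perf_counter() - started
        logger.info("resource=%s status=ok rows=%d seconds=%.2f", name, len(rows), elapsed)
        results[name] = len(rows)
    return results


def broken_loader() -> list:
    """Hypothetical loader that fails the way an unexpected payload might."""
    raise ValueError("unexpected payload shape")


results = run_resources({"orders": lambda: [1, 2, 3], "customers": broken_loader})
```

Structured `key=value` messages like these are easy to search in CI logs, which is the property the quote above is pointing at.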

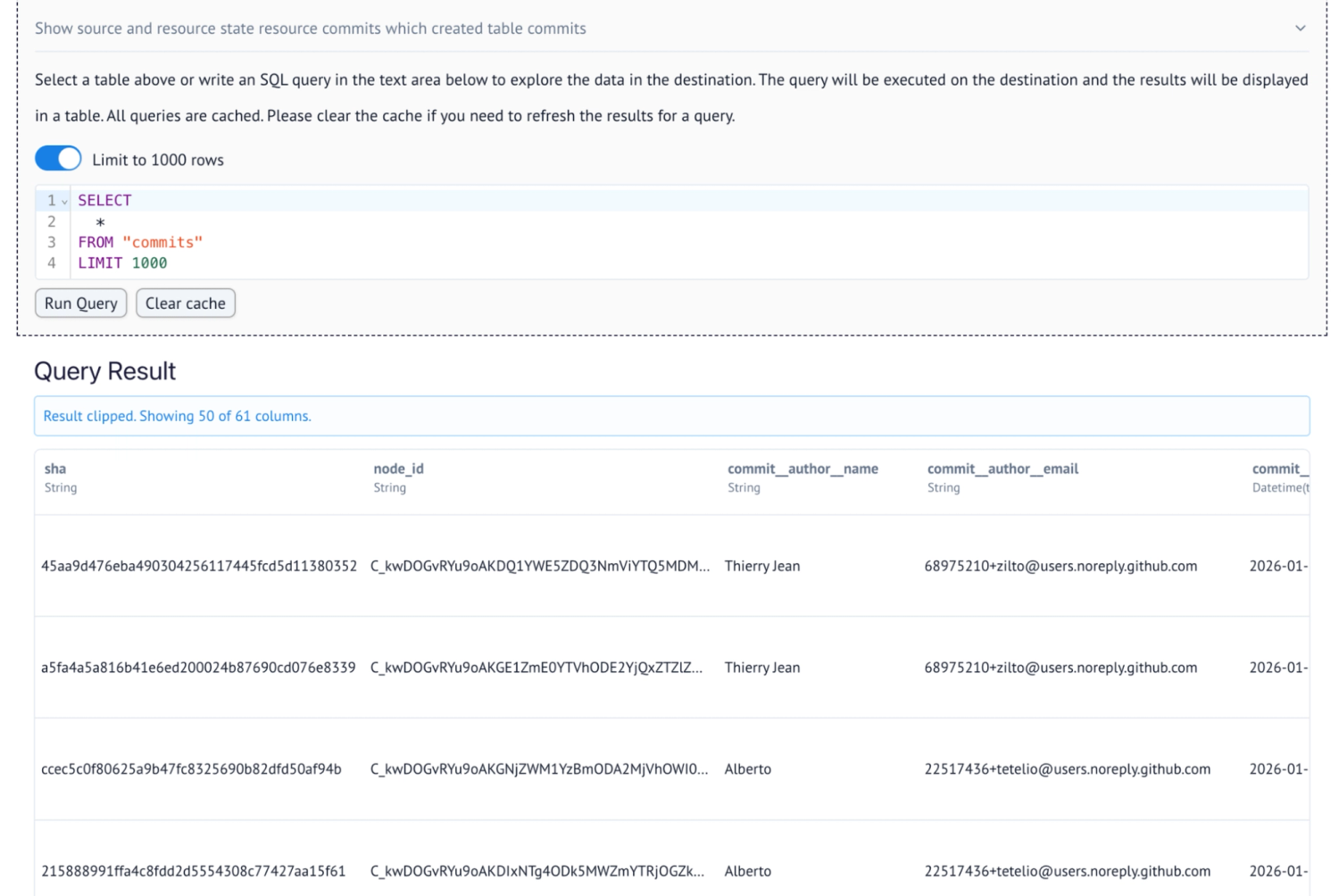

3. They run the full prototype-inspect-fix-re-run loop quickly inside dltHub Pro's local DuckDB workspace

dltHub Pro's local DuckDB workspace enables a fast, tight development cycle: spin up a prototype, browse the raw ingested data, validate the SQL schema, fix any issues, and re-run - all without pulling in a senior engineer. This is what makes AI-assisted pipeline development genuinely practical at consulting speed.

"What I didn't expect is how much it unblocks the team. A mid-level engineer can spin up a prototype, browse the raw data in dltHub Pro's local DuckDB workspace, validate the SQL schema - all without pulling in a senior. That loop of prototype, inspect, fix, re-run - that's the real unlock. Static connectors and cloud platforms just don't give you that kind of development workspace." - Marcello Victorino, Staff Data Engineer, Tasman Analytics
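The prototype, inspect, fix, re-run loop can be sketched with a local embedded database. To keep the example runnable with the standard library alone, it uses `sqlite3` as a stand-in for DuckDB; it does not use dltHub Pro's workspace, and `load_and_inspect` and the sample rows are hypothetical.

```python
import sqlite3

# Map Python value types to SQL column types; anything else falls back to TEXT.
TYPE_NAMES = {int: "INTEGER", float: "REAL", str: "TEXT"}


def load_and_inspect(rows: list, table: str = "orders") -> dict:
    """Load rows into an in-memory database and return {column: declared SQL type}."""
    conn = sqlite3.connect(":memory:")
    columns = list(rows[0])
    # Infer each column's type from the first row (a deliberate simplification).
    ddl = ", ".join(f"{c} {TYPE_NAMES.get(type(rows[0][c]), 'TEXT')}" for c in columns)
    conn.execute(f"CREATE TABLE {table} ({ddl})")
    placeholders = ", ".join("?" for _ in columns)
    conn.executemany(
        f"INSERT INTO {table} VALUES ({placeholders})",
        [tuple(row[c] for c in columns) for row in rows],
    )
    # PRAGMA table_info yields (cid, name, type, notnull, default, pk) per column.
    schema = {name: col_type for _, name, col_type, *_ in conn.execute(f"PRAGMA table_info({table})")}
    conn.close()
    return schema


# Prototype: the API returned the amount as a string.
raw = [{"id": 1, "amount": "19.99"}]
before = load_and_inspect(raw)

# Fix and re-run: cast the amount before loading.
fixed = [{**row, "amount": float(row["amount"])} for row in raw]
after = load_and_inspect(fixed)
```

Inspecting `before` shows `amount` landed as `TEXT`; after the one-line fix and a re-run, `after` shows it as `REAL`. The whole loop runs locally in well under a second, which is the point of a local-first workspace.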

3. Looking Ahead: The Modeling Layer

With the ingestion layer accelerated, Tasman's attention is turning to what happens downstream. The dimensional modeling layer - translating raw ingested data into business-facing dimensions and measures - has historically been where the most repetitive, high-cost consulting work lives. It's also where the business value of data engineering is ultimately judged by the client.

"The modeling layer is where the real cost lives - and it's where consulting companies like ours have been doing the most repetitive, high-cost manual work. We are excited about the dltHub product vision for the modeling layer because the main challenge in all data projects is how the data is being interpreted. That's what helps make better business decisions faster - and that is what we are all about." - Thomas in't Veld, CEO, Tasman Analytics
