Incremental loading

Incremental loading is the act of loading only new or changed data and not old records that we have already loaded. It enables low-latency and low-cost data transfer.

The challenge of incremental pipelines is that if we do not keep track of the state of the load (i.e., which increments were loaded and which are to be loaded), we may encounter issues. Read more about state here.

Choosing a write disposition

The 3 write dispositions:

-

Full load: replaces the destination dataset with whatever the source produced on this run. To achieve this, use

write_disposition='replace'in your resources. Learn more in the full loading docs. -

Append: appends the new data to the destination. Use

write_disposition='append'. -

Merge: Merges new data into the destination using

merge_keyand/or deduplicates/upserts new data usingprimary_key. Usewrite_disposition='merge'.

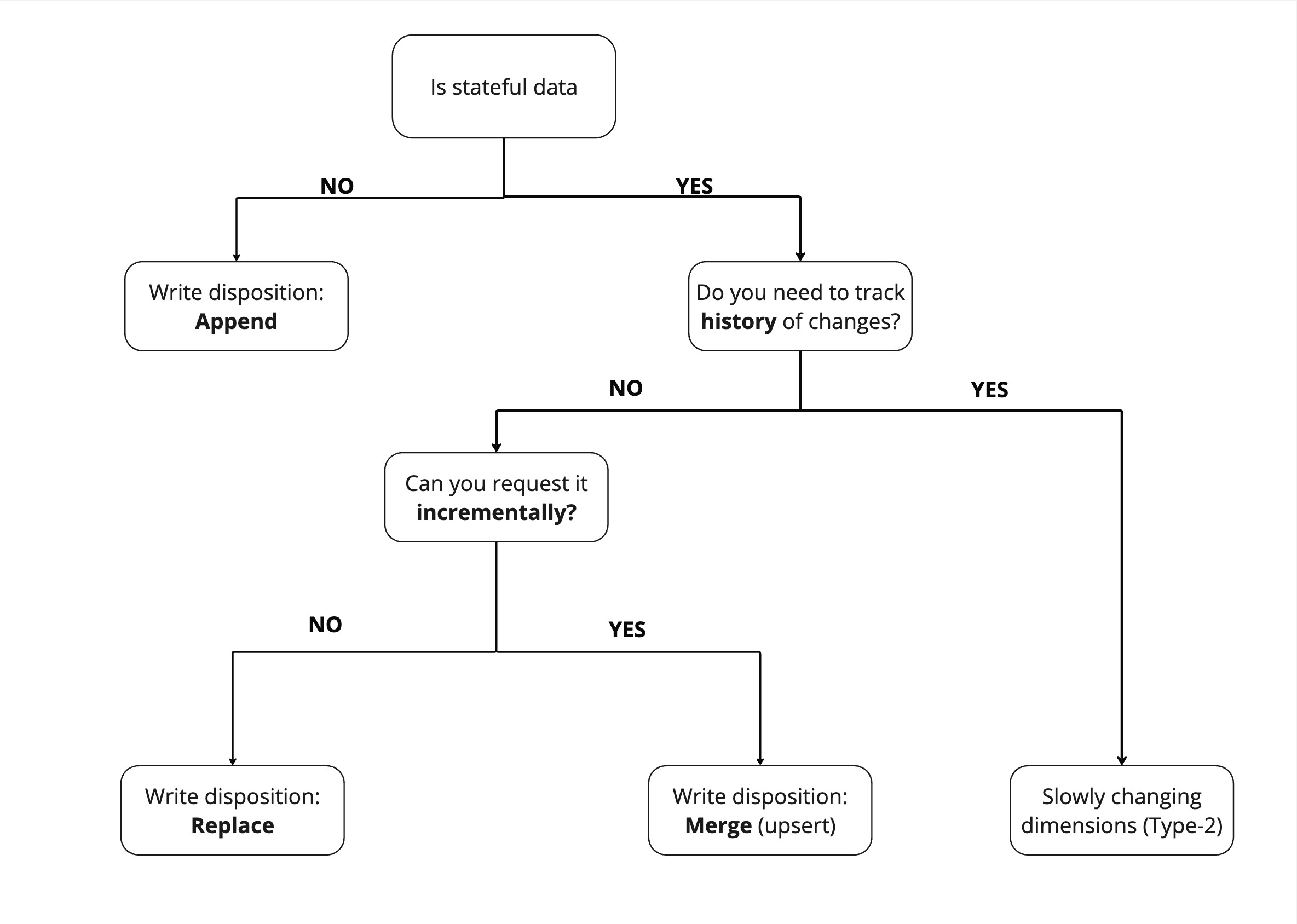

How to choose the right write disposition

The "write disposition" you choose depends on the dataset and how you can extract it.

To find the "write disposition" you should use, the first question you should ask yourself is "Is my data stateful or stateless"? Stateful data has a state that is subject to change - for example, a user's profile. Stateless data cannot change - for example, a recorded event, such as a page view.

Because stateless data does not need to be updated, we can just append it.

For stateful data, comes a second question - Do you need to track history of change ? If yes, you should use slowly changing dimensions (Type-2), which allow you to maintain historical records of data changes over time.

If not, then we need to replace the entire dataset. However, if we can request the data incrementally, such as "all users added or modified since yesterday," then we can simply apply changes to our existing dataset with the merge write disposition.

Incremental loading strategies

dlt provides several approaches to incremental loading:

- Merge strategies - Choose between delete-insert, SCD2, upsert, and insert-only approaches to incrementally update your data

- Cursor-based incremental loading - Track changes using a cursor field (like timestamp or ID)

- Lag / Attribution window - Refresh data within a specific time window

- Advanced state management - Custom state tracking

Doing a full refresh

You may force a full refresh of merge and append pipelines:

- In the case of a

merge, the data in the destination is deleted and loaded fresh. Currently, we do not deduplicate data during the full refresh. - In the case of

dlt.sources.incremental, the data is deleted and loaded from scratch. The state of the incremental is reset to the initial value.

Example:

p = dlt.pipeline(destination="bigquery", dataset_name="dataset_name")

# Do a full refresh

p.run(merge_source(), write_disposition="replace")

# Do a full refresh of just one table

p.run(merge_source().with_resources("merge_table"), write_disposition="replace")

# Run a normal merge

p.run(merge_source())

Passing write disposition to replace will change the write disposition on all the resources in

repo_events during the run of the pipeline.

Next steps

- Cursor-based incremental loading - Use timestamps or IDs to track changes

- Advanced state management - Advanced techniques for state tracking

- Walkthroughs: Add incremental configuration to SQL resources - Step-by-step examples

- Troubleshooting incremental loading - Common issues and how to fix them